by Vaughn Lauer

An interesting title, isn’t it? But the truths are these:

Data (aka behavioral and measurable information, including test scores) are necessary for making good educational decisions.

and

Test scores do not define our child; they simply help us understand our child’s strengths and needs so that we can make informed decisions.

I think we can all agree on the first statement: information (data) in behavioral and measurable terms is essential to good decision-making. But we might question the second “truth” by asking, “Don’t people really define who our children are by test results?”

How do we make sure that who our child is does not get lost in 15+ pages of text or in the 50+ test scores found in these reports? Honestly, I remember thinking that it would be easier to understand the federal tax code.

And as long as we are bound to a school evaluation full of jargon and a slew of seemingly unrelated numbers presented in a cumbersome format, we will be limited in what we can understand. Worse, we can lose sight of who our child is.

IF ONLY…

Evaluations are not yet presented in a video format that would let us see and hear how the various test scores relate to each other and what they tell us about our child’s needs and strengths. It would be so much easier if only we could see these test scores in a more useful way, don’t you think?

Well, there is a way. Let me explain by showing you the test results from one educational report. Although the report contains a great deal of information, I will focus on just one area to show how test scores can make sense to all members of the school/parent team.

ANOTHER WAY TO LOOK AT EDUCATIONAL REPORTS

A good place to begin understanding school and independent educational reports is with the cognitive assessment results. Many people call these IQ tests, but the IQ score is NOT what I am referring to, because that single score is useless in understanding a child, or anyone. However, cognitive tests (e.g., WISC, DAS, WJ, or any similar test) contain many subtests, and it is these subtests that help us begin to understand some of the cognitive processes being measured, just not in the way they are most often presented in evaluation reports. Let me show you what I mean with another way to look at educational reports.

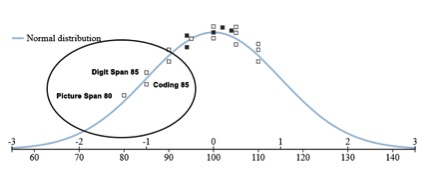

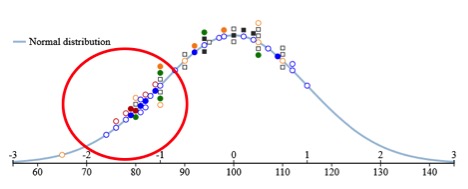

AH, THE OLD BELL CURVE, BUT WITH A TWIST

In Figure 1, not only can you see the test scores from one cognitive test (the Wechsler Intelligence Scale for Children-Fifth Edition) displayed graphically, but you can also see the wide range of scores, with higher scores on the right side and lower scores on the left. We all have a range of skills; for some of us the differences fall within a narrow band, and for others they are much wider. Seeing the range of scores helps us literally see these differences far better than a text- and number-only format allows. But this is only the first of several tests administered to this child, and it sets a basic picture of several cognitive skills.

Figure 1 WISC-V Test Scores

I will not discuss all of the subtests, but I want to draw your attention to the circled scores. These scores indicate that this child has two major areas affecting learning: her working memory and her ability to process information quickly. Although the words “memory” and “processing” do not appear in the subtest names, the subtests in bold text measure these two skills.
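Placing scores from different subtests and tests on one bell curve only works once they share a common scale. Most of the tests named in this article report subtest scaled scores (mean 10, standard deviation 3) and composite or index standard scores (mean 100, standard deviation 15). Each score can therefore be converted to a z-score (how many standard deviations it sits from the mean) and a percentile rank. The short Python sketch below assumes only those two scales; check each report for the scale a given score actually uses. The scores in it are hypothetical, not the ones in Figure 1, and the code is an illustration of the idea, not how SEDS works internally.

```python
from statistics import NormalDist

# Two common norm-referenced scales (assumed here): subtest scaled scores
# (mean 10, SD 3) and composite/index standard scores (mean 100, SD 15).
SCALES = {"scaled": (10, 3), "standard": (100, 15)}


def to_common_scale(score, scale):
    """Return (z-score, percentile rank) for a score on the given scale."""
    mean, sd = SCALES[scale]
    z = (score - mean) / sd
    # Percentile rank = share of a normal population scoring below this point.
    percentile = NormalDist().cdf(z) * 100
    return z, percentile


# Hypothetical scores, for illustration only (not from the report discussed).
examples = [("Subtest A", 7, "scaled"), ("Index B", 85, "standard")]
for name, score, scale in examples:
    z, pct = to_common_scale(score, scale)
    # Both lines print z = -1.00 and a percentile rank of about 16.
    print(f"{name}: z = {z:+.2f}, percentile rank about {pct:.0f}")
```

A scaled score of 7 and a standard score of 85 land at the same spot on the curve, one standard deviation below the mean, which is why scores reported on different scales can be shown side by side on a single chart.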

BUILDING A PICTURE: TEST BY TEST

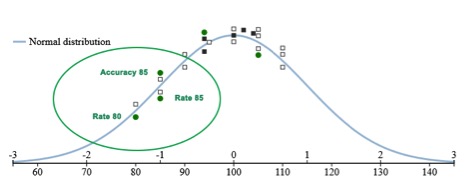

Figure 2 GORT Test Scores

Figure 2 adds the scores (and another range of scores) from the Gray Oral Reading Tests, Fifth Edition (GORT-5), which measure oral reading fluency, among other skills. Interestingly, the new scores within the circled area also reflect working memory and processing speed, further identifying these areas as “weaknesses.” These subtests measure different skills, yet they remain closely aligned with the cognitive test scores originally found within the circled area.

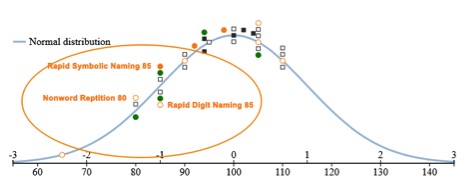

In Figure 3, scores from the Comprehensive Test of Phonological Processing, Second Edition (CTOPP-2) have been added and, not surprisingly, we continue to see subtests related to working memory and processing speed clustering with other tests that measure the same skills in different ways. It is becoming quite clear that several tests reveal the same areas of concern, with each set of scores confirming the others.

Figure 3 CTOPP Test Scores

All tests so far have measured cognitive and processing skills. What’s missing is knowing how they are impacting learning—those academic skills that we see at home and in the classroom.

Figure 4 adds two achievement tests (KTEA and WIAT), showing how the academic scores lie across the bell curve, again indicating a wide range of needs and strengths, with many scores falling within the red circle.

Figure 4 Distribution of all Test Scores

Looking more closely at Figure 5, we see, with no surprise, that academic tasks requiring strong working memory and/or speed (quick processing of information) show up as academic needs right next to the scores for those processes. What we can now see is what the report was attempting to explain, but in a way that makes it easier to understand.

Now, not only do we know what academic areas are in need of instruction, we also know the underlying processes that need to be strengthened for learning to take place.

Figure 5 Zoomed View of Figure 4
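Figures 4 and 5 are read by eye, but the underlying idea, finding which low scores from different tests line up around the same process, can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the test names, subtest names, and scores are hypothetical; the process labels are tags a reader would assign from the report’s own descriptions; and the cutoff of one standard deviation below the mean (z below -1) is an illustrative choice, not a threshold used by the article or by SEDS.

```python
from collections import defaultdict

# Assumed scales: scaled scores (mean 10, SD 3), standard scores (mean 100, SD 15).
SCALES = {"scaled": (10, 3), "standard": (100, 15)}
CUTOFF_Z = -1.0  # hypothetical cutoff for a "need"; the article does not specify one


def z_score(score, scale):
    """Distance from the mean in standard deviations."""
    mean, sd = SCALES[scale]
    return (score - mean) / sd


def group_needs(entries, cutoff=CUTOFF_Z):
    """Group below-cutoff scores by the process label assigned to them.

    Each entry is (test, subtest, score, scale, process). Within each group,
    scores are listed from lowest to highest z-score.
    """
    groups = defaultdict(list)
    ranked = sorted(entries, key=lambda e: z_score(e[2], e[3]))
    for test, subtest, score, scale, process in ranked:
        z = z_score(score, scale)
        if z < cutoff:
            groups[process].append((test, subtest, round(z, 2)))
    return dict(groups)


# Hypothetical entries, for illustration only; process labels are the reader's tags.
entries = [
    ("Cognitive test", "Subtest A", 6, "scaled", "working memory"),
    ("Reading test", "Subtest B", 5, "scaled", "processing speed"),
    ("Phonological test", "Subtest C", 4, "scaled", "working memory"),
    ("Achievement test", "Subtest D", 80, "standard", "working memory"),
    ("Achievement test", "Subtest E", 108, "standard", "vocabulary"),
]
for process, items in group_needs(entries).items():
    print(process, items)
```

Sorting first and then grouping mirrors what the figures show visually: low scores from a cognitive test, a reading test, and an achievement test end up in the same “working memory” group, while a score above the cutoff drops out.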

This is an “AHA” moment for sure, and the school team can quickly see three important pieces of information. The first is how the test scores reveal ranges of abilities. The second is how multiple subtest measures of cognition and processing skills begin to cluster together. And finally, once measures of academic skills (reading, math, spelling, etc.) are added, the team is able to make informed decisions, because it can see how all the tests relate to each other and how the underlying processes affect learning. This understanding is also necessary for determining eligibility and for developing needs-based IEPs.

You might be saying to yourself something like, “Wait, I am not a psychologist, so I don’t know how to explain all this to anyone.” And you would be right—you are likely not a psychologist. You don’t need to be.

You simply need to know how to show the test scores to the school team, as I did here, building the visual presentation one test at a time. Then state what you learned from the test scores, noting which scores show apparent strengths and needs, and ask whether the school team members see the same things you do.

I know you already know the answer to that one: it’s YES! It’s YES because they (each of the team members, not just one or two) can literally see the relationships among the test scores and learning, which makes them informed. Informed teams make much better educational decisions than uninformed teams. And the two truths I mentioned at the beginning of the article should be much more believable now.

You can do all of what I just presented (and more) by using a program called the Special Education Decoder System (SEDS). This program is being successfully used by parents and advocates at IEP meetings across the U.S. and in Canada. And you can try out a limited version by visiting https://specialeducationdecoder.com and clicking on Free Trial. The trial version is limited to three specific tests, and I do suggest using the test scores provided, so that you can again observe how they cluster around needs and strengths.

Be sure to check which tests are currently included in the full version. Also view the manual so that you can see all of the components available within the SEDS program.

NOTE: Keep in mind that if you are new to the “world of special education,” you may well be overwhelmed by all of the “technical talk” in the reports. That is quite typical, and if you do not have experience with statistical terms or familiarity with some commonly used tests, this program could easily fail to meet your needs. It is a tool to help you understand the test scores, but it does not analyze the tests or provide a report on what they mean. If in doubt, seek out a friend, professional, or advocate who can help you make the best use of this tool.

Or, if you have questions, please contact me directly at Vaughn.Lauer@verizon.net.

eParent has not evaluated the merits of this software and is presenting it strictly as an educational option. eParent does not have any financial connection or relationship with the company and welcomes input from the educational community regarding its efficacy, application and value. Rick Rader, MD, Editor in chief, Exceptional Parent Magazine